Unit 5: Impact of Computing — Effects, Access, and Ethics

Computing innovations have revolutionized how we live, work, and communicate. However, these innovations are rarely purely "good" or purely "bad." In AP Computer Science Principles, you must analyze these innovations through multiple lenses—examining their unintended consequences, the inequities in who can access them, and the biases embedded within them.

The Nature of Computing Innovations (Topic 5.1)

Every computing innovation—whether a physical device (like a self-driving car) or a non-physical concept (like social media algorithms)—has impacts that ripple through society. To analyze them, we look at the intended purpose versus the unintended consequences.

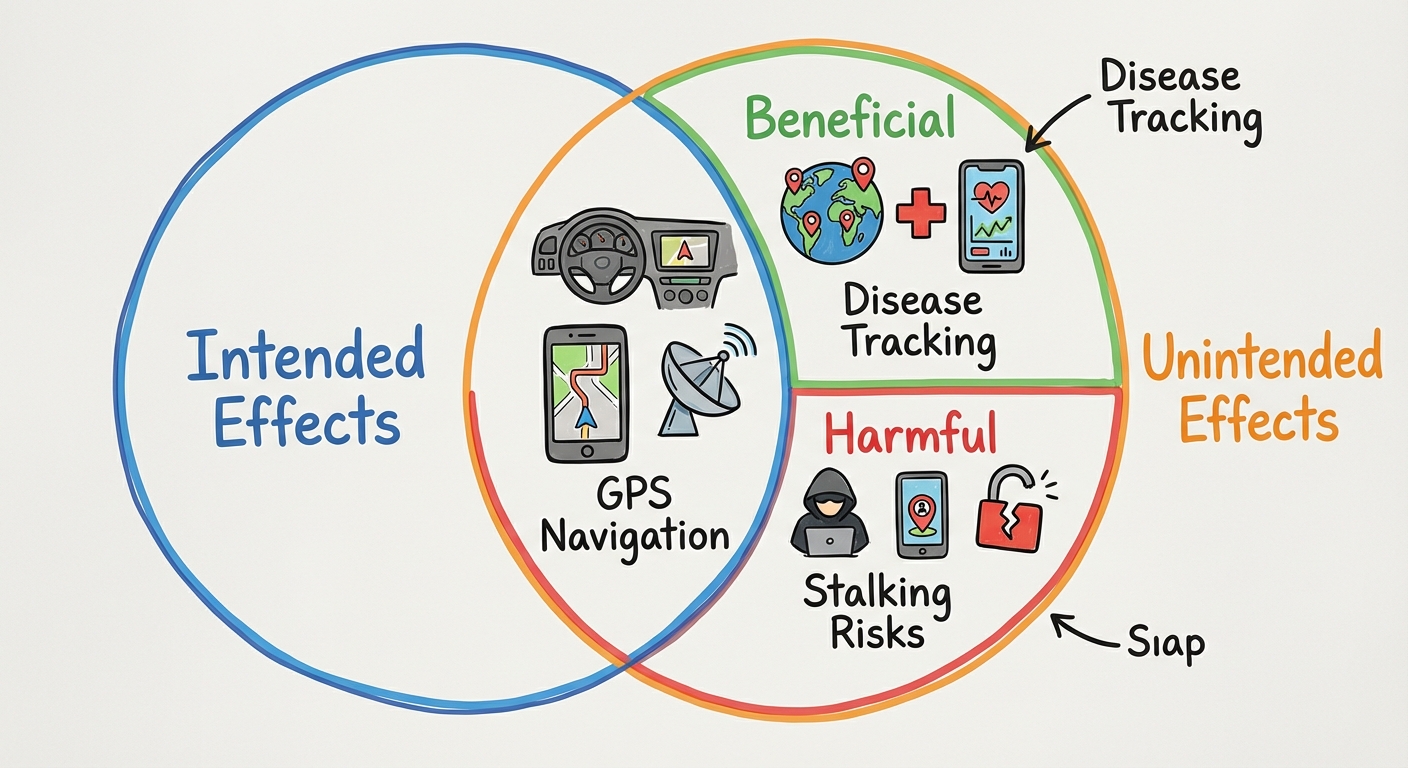

Intended vs. Unintended Effects

- Intended Effects: The problem the tool was designed to solve.

  - Example: GPS was created to help people navigate locations accurately.

- Unintended Effects: Outcomes that were not planned for by the creators. These can be beneficial OR harmful.

  - Unintended Beneficial Example: GPS data is now used by epidemiologists to track the spread of diseases (contact tracing).

  - Unintended Harmful Example: GPS enables non-consensual tracking or stalking of individuals.

Perspective Matters

A key concept in Big Idea 5 is that a single effect can be viewed as beneficial by one group and harmful by another.

| Innovation | Beneficial Perspective | Harmful Perspective |
|---|---|---|
| Targeted Advertising | Small businesses reach the exact customers interested in their niche products, saving marketing money. | Users feel their privacy is invaded and their personal data is being commodified without explicit control. |
| Automation/Robotics | Companies increase efficiency, lower costs, and remove humans from dangerous environments. | Workers in those sectors face displacement and unemployment. |
| Citizen Science Apps | Scientists get massive amounts of data from the public to solve complex problems (e.g., identifying bird species). | Participants may unwittingly expose their location or habits if data security is weak. |

Categories of Impact

When analyzing an innovation on the AP exam, consider these dimensions:

- Economic: Does it create/destroy jobs? Does it create new markets?

- Cultural: Does it change how people interact or celebrate? (e.g., translation apps connecting cultures vs. eroding local dialects).

- Legal/Ethical: Does it violate privacy laws? Who is liable if it fails?

The Digital Divide (Topic 5.2)

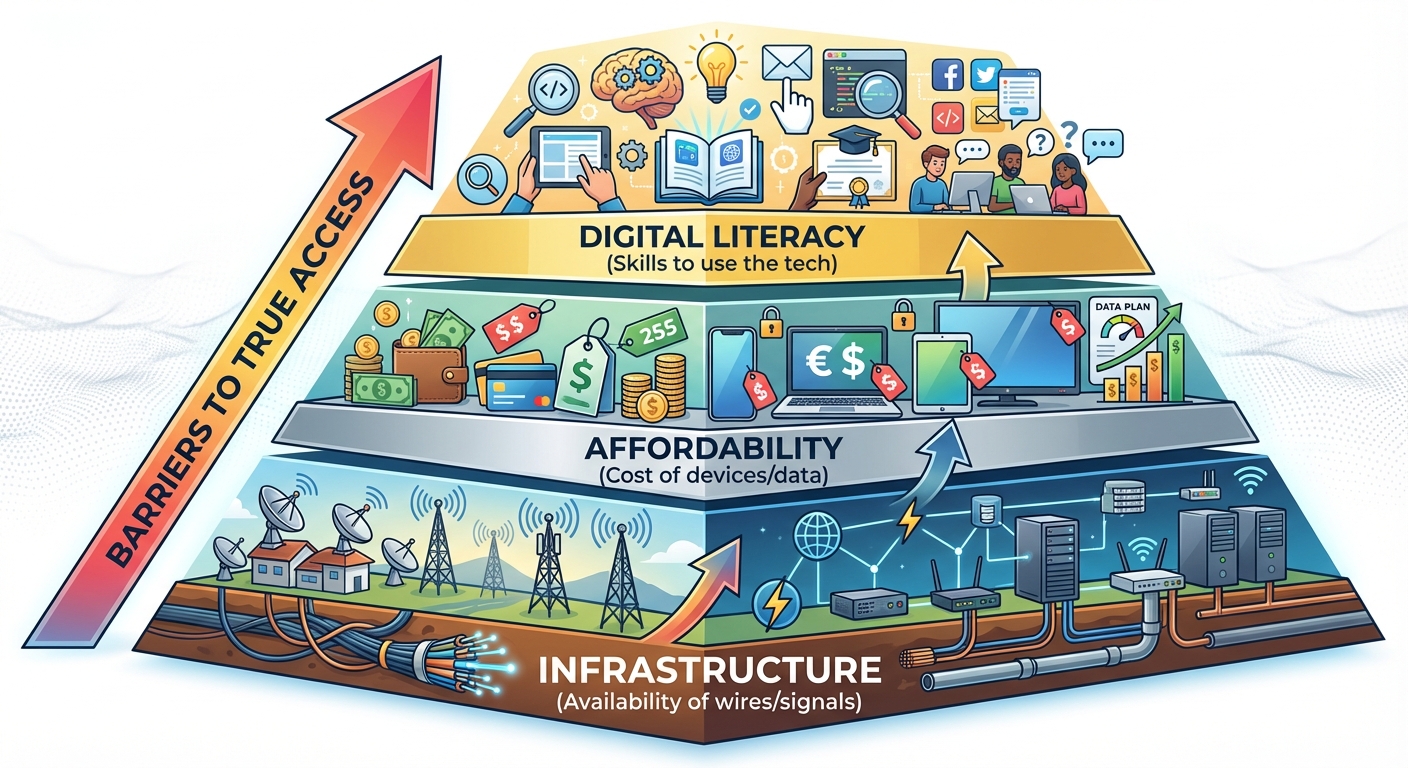

Not everyone has equal access to computing innovations. The Digital Divide refers to the gap between those who have access to computing devices and the internet and those who do not.

Factors Influencing the Divide

The divide is not just about who owns a laptop. It manifests via several factors:

- Socioeconomic Status: The cost of high-speed internet and up-to-date hardware restricts access for lower-income individuals.

- Geographic Location: Infrastructure differs between urban, suburban, and rural areas. Rural areas often lack fiber-optic cables or 5G towers.

- Demographics: Differences in access based on age, race, gender, or disability status.

Impact of the Digital Divide

The Digital Divide affects more than just entertainment; it creates systemic inequities in society.

- Civic Participation: Many government services, voting information, and community forums are now primarily online. People without access have a harder time participating and being represented.

- Education: Students without internet access at home (the "Homework Gap") fall behind peers who have instant access to research tools.

- Employment: Most job applications and professional networking happen online.

Key Takeaway: The Internet is often viewed as a "great equalizer," but widespread access is required for that to be true. Organizations like One Laptop Per Child and government broadband initiatives aim to narrow this gap.

Computing Bias (Topic 5.3)

Computers are often assumed to be neutral because they operate on logic and math. However, computing systems frequently reflect the biases of their creators or the data they are fed. This is known as Algorithmic Bias.

Sources of Bias

Bias usually enters a system in one of three ways:

1. Bias in Data (MOST COMMON)

Machine learning (AI) tools "learn" by looking at historical data. If the history is biased, the future predictions will be biased.

- Example: A hiring algorithm is trained on 10 years of successful resumes. If the company mostly hired men in the past, the AI learns that "being male" is a trait of success and starts rejecting female candidates.

2. Bias in Algorithm Design

Programmers may inadvertently embed their own blind spots into the logic of the code.

- Example: A facial recognition system developed by a homogeneous team may work perfectly on their faces but fail to recognize faces of different skin tones because they didn't test for it.

3. Bias in Accessible Design

Software designed without considering users with disabilities (e.g., lacking screen reader support or high-contrast modes) excludes those users, even when no one intended to exclude them.
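The hiring example under "Bias in Data" can be made concrete with a toy sketch. This is not how a real machine learning system works. It is a deliberately simplified illustration, and the records, the `train` and `predict` functions, and the 0.5 threshold are all hypothetical. The "model" does nothing more than memorize past hire rates per group, which is enough to show how biased history turns into biased predictions.

```python
from collections import Counter

# Hypothetical historical records: (gender, years_experience, was_hired).
# The past decisions favor men regardless of experience.
history = [
    ("M", 5, True), ("M", 2, True), ("M", 4, True), ("M", 1, False),
    ("F", 5, False), ("F", 6, True), ("F", 3, False), ("F", 4, False),
]

def train(records):
    """'Learn' the fraction of past applicants hired in each group."""
    hired = Counter()
    total = Counter()
    for gender, _years, was_hired in records:
        total[gender] += 1
        if was_hired:
            hired[gender] += 1
    return {group: hired[group] / total[group] for group in total}

def predict(model, gender, threshold=0.5):
    """Recommend a candidate only if their group's past hire rate meets the threshold."""
    return model[gender] >= threshold

model = train(history)
print(model)                # hire rate the model learned for each group
print(predict(model, "M"))  # recommendation for a male candidate
print(predict(model, "F"))  # recommendation for an equally qualified female candidate
```

Notice that the code contains no rule saying "reject women." The unequal outcome comes entirely from the data it was trained on, which is why asking what data a system learned from matters.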

Combating Bias

How do computer scientists reduce bias? (This is a frequent exam topic!)

- Diverse Teams: Developing software with a team diverse in gender, race, and background helps catch blind spots early.

- Crowdsourcing: Gathering data or feedback from a large, open cross-section of the public provides a wider variety of inputs.

- Algorithmic Auditing: Regularly testing the software against different demographic groups to ensure fairness.
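As a rough illustration of algorithmic auditing, the sketch below compares how often a system's output is wrong for each demographic group. The group names, the sample results, and the `error_rate_by_group` function are hypothetical. A real audit would use far more data and more than one fairness measure.

```python
def error_rate_by_group(results):
    """Return {group: fraction of wrong predictions}.

    results is a list of (group, predicted, actual) tuples.
    """
    errors = {}
    counts = {}
    for group, predicted, actual in results:
        counts[group] = counts.get(group, 0) + 1
        if predicted != actual:
            errors[group] = errors.get(group, 0) + 1
    return {group: errors.get(group, 0) / counts[group] for group in counts}

# Hypothetical audit sample: what the system predicted vs. the correct answer.
results = [
    ("group_a", True, True), ("group_a", False, False),
    ("group_a", True, True), ("group_a", False, True),
    ("group_b", True, False), ("group_b", False, True),
    ("group_b", True, True), ("group_b", False, False),
]

rates = error_rate_by_group(results)
gap = max(rates.values()) - min(rates.values())
print(rates)  # error rate per group
print(gap)    # a large gap between groups is a signal to investigate further
```

A gap between groups does not by itself explain why the system is unfair, but it tells the team where to look, for example at whether one group was underrepresented in the training data.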

Real-World Example: Facial Recognition

Facial recognition software has historically had higher error rates for people of color. This becomes an ethical crisis when law enforcement uses these tools to identify suspects, leading to false arrests. This highlights how a technical failure (bias) becomes a social harm.

Common Mistakes & Pitfalls

- Confusing "Unintended" with "Bad": Students often assume unintended consequences are always negative. Remember the GPS example—contact tracing was unintended but positive.

- Thinking Code is Neutral: Never assume a computer decision is fair just because a machine made it. Always ask: "What data was used to train this?"

- Oversimplifying the Digital Divide: It is not just about having the internet; it is about the quality of access and the digital literacy required to use it effectively.

- Ignoring the Complexity of Bias: Bias is rarely intentional. It is usually the result of oversight or unrepresentative training data.

Summary Checklist

- [ ] Can you list one beneficial and one harmful effect of a specific technology (e.g., Social Media)?

- [ ] Can you explain how the Digital Divide affects a citizen's ability to vote or find a job?

- [ ] Can you identify how bias enters a Machine Learning system via training data?